semantics from scans

This envisionment prototype shows how OCR can be used to help add semantic markup to scanned documents. This is specifically for situations where a level of expert judgement is needed. That is times when a fully automated solution is not required, but where we want to make the most of what the computer can do to help.

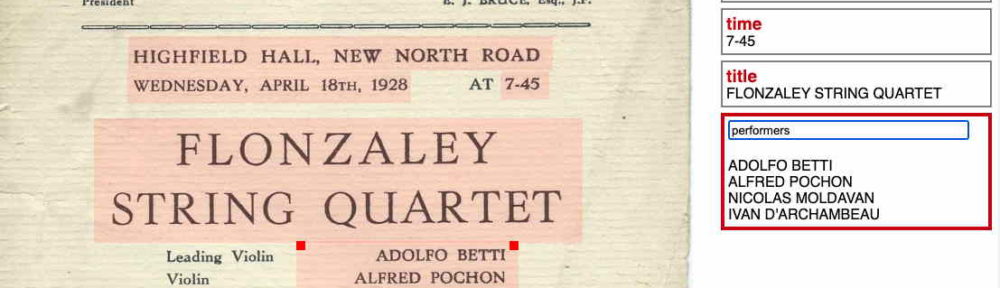

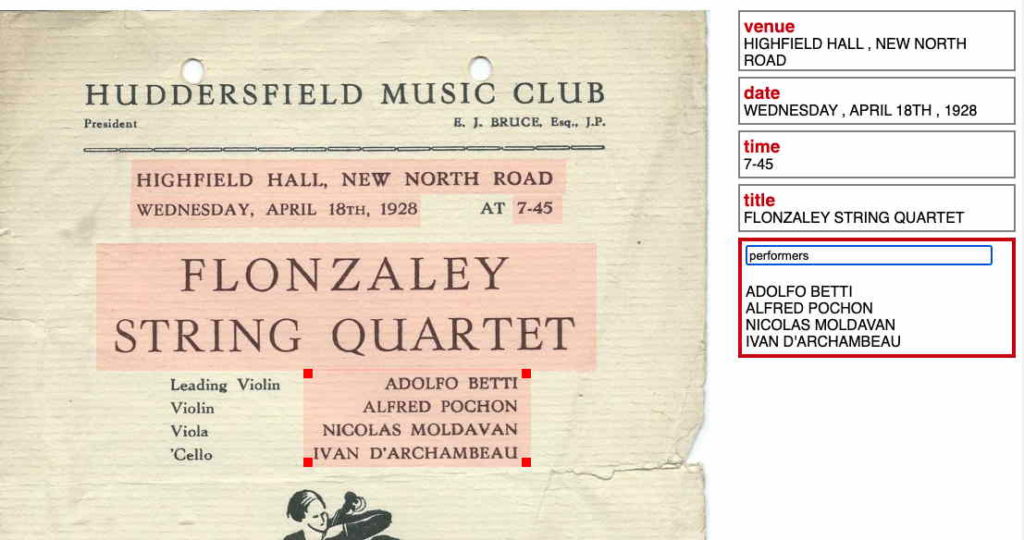

This prototype arose out of early discussions in the InterMusE project where we are working with concert programmes. The OCR Markup demo uses the output of Google Vision API as this provides the position of each word in the document. On its own OCR is useful to allow free text searching of large digitised collections. It is also possible to automatically identify common types of data such as dates, or people’s names. However, when a human looks at a document they might like to also identify the title of a concert, or who was performing.

The demo allows the user to select and name areas of the image and automatically extracts the OCR text for the region. The idea is that this can be done incrementally depending on the the purpose and goals of the user. For example, when first scanned an archivist may want to simply annotate key features such as the date, venue and title of the concert, Later a community member might be looking for references to a particular family of musicians, use free text search to find candidate documents and then markup relevant parts.

Here’s a short video of the prototype in action.

You can experiment with the prototype at:

https://datatodata.com/intermuse/experimental/ocrmarkup/

It doesn’t save anything, so feel free to play and just refresh the window to restart it.

If you’d like to try using OcrMarkup on a project of your own, there is a version where you can load your own images, save annotations locally, import, export, etc.:

https://datatodata.com/intermuse/ocrmarkup/demo/v001/#ocrmarkup

This is still experimental, but a little more functional.

See the description of the TalkOver demo for more details on using the list tab.