This fifth post in the REF Redux series looks at gender issues, in particular the likelihood that the apparent bias in computing REF results will disproportionately affect women in computing. While it is harder to find full data for this, a HEFCE post-REF report has already done a lot of the work.

Spoiler: REF results are exacerbating implicit gender bias in computing

A few weeks ago a female computing academic shared how she had been rejected for a job; in informal feedback she heard that her research area was ‘shrinking’. This seemed likely to be due to the REF sub-area profiles described in the first post of this series.

While this is a single example, I am aware that recruitment and investment decisions are already adjusting widely due to the REF results, so that any bias or unfairness in the results will have an impact ‘on the ground’.

In fact, gender and other equality issues were explicitly addressed in the REF process, with submissions asked what equality processes, such as Athena Swan, they had in place.

This is set in the context of a large gender gap in computing. Despite there being more women undergraduate entrants than men overall, only 17.4% of computing first degree graduates are female and this has declined since 2005 (Guardian datablog based on HESA data). Similarly, only about 20% of computing academics are female (“Equality in higher education: statistical report 2014”), and again this appears to be declining:

from “Equality in higher education: statistical report 2014”, table 1.6 “SET academic staff by subject area and age group”

The imbalance in application rates for research funding has also been an issue that the European Commission has investigated in “The gender challenge in research funding: Assessing the European national scenes”.

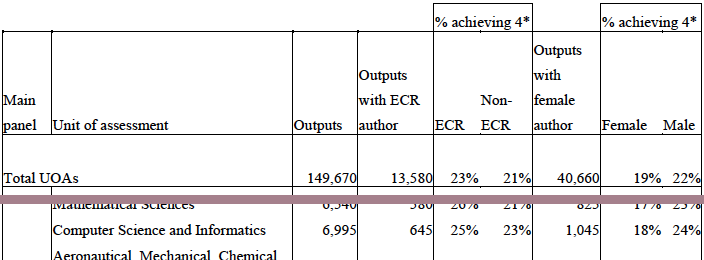

HEFCE commissioned a post-REF report “The Metric Tide: Report of the Independent Review of the Role of Metrics in Research Assessment and Management”, which includes substantial statistics concerning the REF results and models of fit to various metrics (not just citations). Helpfully, Fran Amery, Stephen Bates and Steve McKay used these to create a summary of “Gender & Early Career Researcher REF Gaps” in different academic areas. While far from the largest, Computer Science and Informatics is in joint third place in terms of the gender gap as measured by the 4* outputs.

Their data comes from the HEFCE report’s supplement on “Correlation analysis of REF2014 scores and metrics”, and in particular table B4 (page 75):

Extract of “Table B4 Summary of submitting authors by UOA and additional characteristics” from “The Metric Tide : Correlation analysis of REF2014 scores and metrics”

This shows that while 24% of outputs submitted by males were ranked 4*, only 18% of those submitted by females received a 4*. That is, a male member of staff in computing is 33% more likely to get a 4* than a female.
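As a quick check of that arithmetic, here is a minimal sketch that uses only the two percentages quoted above. The variable and function names are my own, purely for illustration; nothing here comes from the HEFCE report beyond the 24% and 18% figures.

```python
# Share of submitted outputs rated 4*, as quoted above from Table B4.
male_four_star = 0.24
female_four_star = 0.18


def relative_increase(a: float, b: float) -> float:
    """Return how much larger a is than b, as a fraction of b."""
    return (a - b) / b


# Ratio of the two rates, and the same gap expressed as a relative increase.
ratio = male_four_star / female_four_star
increase = relative_increase(male_four_star, female_four_star)

print(f"ratio: {ratio:.2f}, relative increase: {increase:.0%}")
```

Note that this is a relative difference: the gap in absolute terms is six percentage points, which is one third of the female rate.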

Now this could be due to many factors, not least the relative dearth of female senior academics reported by HESA (“Age and gender statistics for HE staff”).

extract of HESA graphic “Staff at UK HE providers by occupation, age and sex 2013/14” from “Age and gender statistics for HE staff”

However, the HEFCE report goes on to compare this result with metrics, in a similar way to my own analysis of subareas and institutional effects. The report states (my emphasis) that:

Female authors in main panel B were significantly less likely to achieve a 4* output than male authors with the same metrics ratings. When considered in the UOA models, women were significantly less likely to have 4* outputs than men whilst controlling for metric scores in the following UOAs: Psychology, Psychiatry and Neuroscience; Computer Science and Informatics; Architecture, Built Environment and Planning; Economics and Econometrics.

That is, for outputs that look equally good from metrics, those submitted by men are more likely to obtain a 4* than those submitted by women.

Having been on the computing panel, I never encountered any incidents that would suggest any explicit gender bias. Personally speaking, although outputs were not anonymous, the only time I was aware of the gender of authors was when I already knew them professionally.

My belief is that these differences are more likely to have arisen from implicit bias, in terms of what is valued. The Royal Society of Edinburgh report “Tapping our Talents” warns of the danger that “concepts of what constitutes ‘merit’ are socially constructed” and the EU report “Structural change in research institutions” talks of “Unconscious bias in assessing excellence”. In both cases the context is recruitment and promotion procedures, but the same may well be true of the way we assess the results of research.

In previous posts I have outlined the way that the REF output ratings appear to selectively benefit theoretical areas compared with more applied and human-oriented ones, and old universities compared with new universities.

While I’ve not yet been able to obtain numbers to estimate the effects, in my experience the areas disadvantaged by REF are precisely those which have a larger number of women. Also, again based on personal experience, I believe there are more women in new university computing departments than old university departments.

It is possible that these factors alone may account for the male–female differences, although this does not preclude an additional gender bias.

Furthermore, if, as seems to be the case, the REF sub-area profiles are being used to skew recruiting and investment decisions, then this means that women will be selectively disadvantaged in future, exacerbating the existing gender divide.

Note that this is not suggesting that recruitment decisions will be explicitly biased against women, but by unfairly favouring traditionally more male-dominated sub-areas of computing this will create or exacerbate an implicit gender bias.