The very nature of digital technology and AI breaks free markets, leading to runaway inequality even with the best intentions of industry … but some tech companies further exploit these effects.

This is the fifth of a series of blogs based on my keynote “The abomination of AI” at ICoSCI 2026. Each has an accompanying segment of the video and slides from the talk as well as detailed notes and references. Section numbers refer to the full report which will be released in the final blog. The full length video, complete slides and further information can be found at: https://alandix.com/academic/talks/ICOSCI-2026-abomination-of-AI/

Previously …

§1. Every industry is driven by profits and power, but there is something about the nature of AI itself, in the way it interacts with market forces in the world, that is problematic and different from other technologies.

§2. Can any technology be neutral? AI can be used for good purposes, such as advances in healthcare. It can also have bad outcomes such as bias in the criminal justice system or online exploitative pornography. Perhaps most often it is creating the frivolous or even ugly.

§3. The obvious impact of AI is in the things it does directly. Some technologies also change the very nature of society, affecting even those who do not use them. Cars are an obvious example. AI is also such a technology.

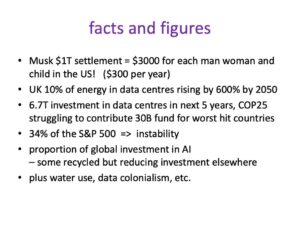

§4. Doomsayers worry about the point when AI becomes sentient, outgrowing its creators. The real danger is more insidious: the massive financial and human impacts of AI seem almost obscene.

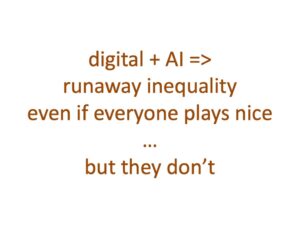

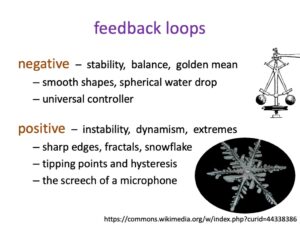

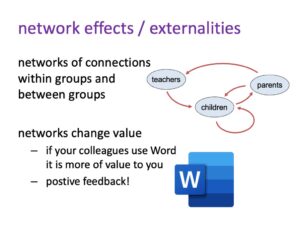

§5.1, §5.2. Network externalities, the way one person’s use of AI and digital tech changes its value for others, create positive feedback loops, leading to runaway growth and emergent monopolies, the nemesis of free markets.

5.3 Digital and AI break market economics

So digital technology breaks market economics. Yet this is what our whole world is built on. Even countries that are not fully market economies, such as China, often rely upon market economics extensively, both internally and globally. Indeed, market economics has driven nearly all late-20th-century trade, and much before that, including the industrial revolution. Market economics has not been good for everything, and certainly not for everybody, but it has had its successes. And now it is broken.

Digital technology breaks market economics and AI makes it worse.

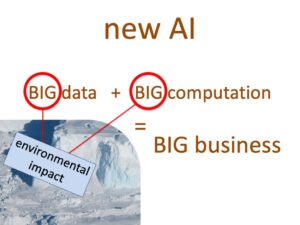

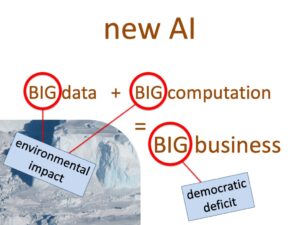

One way AI makes this worse is that the new AI, large language models and the like, is built on big data and big computation. This means it requires big business … really big business, businesses bigger than most countries, just to get into the game.

But once you’re in that game, you have the volume of people using your systems, generating more data, and perhaps the power to leverage or encourage other people to give you data. For example, governments sometimes give certain companies access, occasionally exclusive access, to public health data. And of course this means the successful companies then have the money to invest in more data centres to process that data.

Again, there is a positive feedback loop, exacerbated by the huge computational and data needs of AI.
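To make the feedback loop concrete, here is a minimal toy sketch, not a model from the talk. It assumes that each round a firm’s pull on users is its current market share raised to a power `strength` (a hypothetical parameter), then renormalised. A `strength` above 1 stands in for increasing returns (more users, more data, better models, more users); a `strength` below 1 stands in for the diminishing returns that classic market economics assumes. The functions `feedback_step` and `simulate` are illustrative names only.

```python
"""Toy model of a positive feedback loop between two competing firms.

Assumption (illustrative, not from the talk): each round, a firm's 'pull'
on users is its current share raised to the power `strength`.
strength > 1  ~ increasing returns (users -> data -> better AI -> users)
strength == 1 ~ no feedback, shares stay where they are
strength < 1  ~ diminishing returns, shares drift back towards equality
"""


def feedback_step(shares: list[float], strength: float) -> list[float]:
    # Raise each share to the power `strength`, then renormalise so they sum to 1.
    pulls = [s ** strength for s in shares]
    total = sum(pulls)
    return [p / total for p in pulls]


def simulate(shares: list[float], strength: float, rounds: int) -> list[float]:
    # Apply the feedback step repeatedly and return the final shares.
    for _ in range(rounds):
        shares = feedback_step(shares, strength)
    return shares


if __name__ == "__main__":
    start = [0.52, 0.48]  # hypothetical: a small initial lead for firm A
    for strength in (0.8, 1.0, 1.5):
        final = simulate(start, strength, rounds=10)
        print(f"strength={strength}: firm A share after 10 rounds = {final[0]:.3f}")
```

With diminishing returns the 52/48 split drifts back towards 50/50; with increasing returns the same small lead snowballs to roughly 99% of the market within ten rounds. Nobody has to behave badly for this to happen: the runaway comes from the shape of the returns alone.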

And of course that has effects: it has an environmental impact, as seen in the data about energy and water use.

But also, because the companies have to be so big, you may end up with a democratic deficit. This was very evident in America when the ‘tech bros’ surrounded Trump at his inauguration. Although there have been some fallings-out between some of them since, the power of big business was very evident. And that is in the US; smaller countries really struggle because the businesses are bigger than they are.

5.4 With the best will in the world …

So digital plus AI, by its very nature, leads to runaway inequality. You have to work hard to stop that happening.

This doesn’t mean you can’t. As we discussed, our body’s immune system has positive feedback loops that are important for fighting infection. Unchecked, these would lead to autoimmune disease, but they are moderated by negative feedback loops that control them. Similarly, the macro-economic feedback loops of digital technology and AI are not unstoppable, but their natural tendency is simply to keep on going.

Now this potentially runaway growth of AI happens even if everybody plays nice. It is not about evil owners of AI companies who are trying to control the world. With the best will in the world, this will happen.

But, of course, they don’t always have the best will in the world.

Some of the problem is baked into our commercial legal systems. In the UK, if you are on the board of directors of a company, your legal responsibility is to your shareholders, which typically means profit maximisation. So even if you might have liked to do something better for society or the world, you are legally bound to do the thing that maximises profits.

So the leaders of big AI are almost forced not to do the right thing, but how much they lean into that varies between individuals.

5.5 … Or not

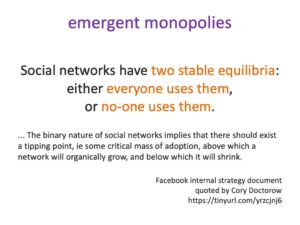

Facebook internal strategy document quoted by Cory Doctorow [Do25]

In 2025 Meta, the owner of Facebook, was in the midst of an anti-trust case in the US regarding their takeover of Instagram in the early 2010s [Da25]. The US Government eventually lost their case against Meta, due largely to the emergence of TikTok as a competitor in the meantime. However, as part of the case various internal Facebook documents came into the public domain. Cory Doctorow, the open software campaigner, quotes from one internal strategy document, which showed that Mark Zuckerberg and Facebook understood precisely the role of emergent digital monopolies:

“Social networks have two stable equilibria: either everyone uses them, or no-one uses them.” [Do25]

“… The binary nature of social networks implies that there should exist a tipping point, ie some critical mass of adoption, above which a network will organically grow, and below which it will shrink.”
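The “two stable equilibria” and “tipping point” in this quote can be illustrated with a standard toy adoption model. This is a minimal sketch under stated assumptions, not Facebook’s own model: adoption `x` (the fraction of people using the network, between 0 and 1) changes each round by `rate * x * (1 - x) * (x - critical_mass)`. Both `rate` and `critical_mass` are hypothetical parameters chosen for illustration. The model has stable equilibria at 0 (no-one) and 1 (everyone), and an unstable one at `critical_mass`, which plays the role of the tipping point.

```python
"""Toy tipping-point model for the 'two stable equilibria' description.

Assumption (illustrative, not Facebook's model): adoption x in [0, 1]
changes each round by rate * x * (1 - x) * (x - critical_mass).
  x = 0             -> stable: no-one uses the network
  x = 1             -> stable: everyone uses the network
  x = critical_mass -> unstable: the tipping point
"""


def step(x: float, critical_mass: float, rate: float) -> float:
    # Change is positive above the critical mass (the network grows
    # organically) and negative below it (the network shrinks).
    return x + rate * x * (1 - x) * (x - critical_mass)


def run(x0: float, critical_mass: float = 0.3, rate: float = 0.5, rounds: int = 200) -> float:
    # Iterate the adoption dynamics from a starting fraction x0.
    x = x0
    for _ in range(rounds):
        x = step(x, critical_mass, rate)
    return x


if __name__ == "__main__":
    for x0 in (0.25, 0.30, 0.35):  # just below, at, and just above critical mass
        print(f"start {x0:.2f} -> adoption after 200 rounds {run(x0):.4f}")
```

A network that starts just below the hypothetical critical mass dwindles towards no-one, while one that starts just above it grows towards everyone. This is why, for a company that understands these dynamics, nudging a rival to the wrong side of the tipping point is so valuable.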

Other emails show that this understanding did lead to very deliberate attempts to stifle Instagram’s growth [Da25]. That is, Facebook were very aware of network effects and the presence of tipping points, and prepared to use techniques to ensure that they ended up on the side of that critical mass they wanted to be on.

These statements were made in a largely pre-AI context (at least as AI is understood today), about emergent monopolies in social media, but the same effects are of course intensified by AI. I’m sure Meta was not, and is not, alone in being aware of these effects and being prepared to use them.

Coming next …

Part 6 – should we worry?

Runaway growth of AI is not painless – opportunity costs of investment and human costs of lost jobs. Gains may be transitory – buy-now-pay-later tech risks tying users into spiralling costs.


References

[Da25] David Dayen (2025). The Government Has Already Won the Meta Case. The American Prospect, April 16, 2025. https://prospect.org/2025/04/16/2025-04-16-government-already-won-meta-case-tiktok-ftc-zuckerberg/

[Do25] Cory Doctorow (2025). Mark Zuckerberg personally lost the Facebook antitrust case. Pluralistic, April 18, 2025. https://pluralistic.net/2025/04/18/chatty-zucky/