[An edited version of this post is reproduced at HCIbook online!]

I recently got a Facebook message from a researcher about to do a study who asked, “Do you think 5 (users) is enough?”

Along with Fitts’ Law and Miller’s 7+/-2, this “five is enough” must be among the most widely cited, and most widely misunderstood and misused, aphorisms of HCI and usability. Indeed, I feel that this post belongs more in ‘Myth Busters’ than in my blog.

So, do I think five is enough? Of course, the answer is (annoyingly) “it depends”. Sometimes, yes, five is enough; sometimes fewer will do: one appropriate user, or maybe no users at all; and sometimes you need more, maybe many more: 20, 100, 1000. But even when five is enough, the reasons are usually not directly related to Nielsen and Landauer’s original paper, which people often cite (although rarely read), or to Nielsen’s “Why You Only Need to Test With 5 Users” Alertbox (probably more often actually read … well, at least the title).

The “it depends” is partly down to the particular circumstances of the situation (types of people involved, kind of phenomenon, etc.) and partly to the kind of question you want to ask. The latter is the most critical issue: if you don’t know what you want to know, how can you know how many users you need?

There are several reasons for doing a user study or experiment, and more than one may apply:

1. To improve a user interface (formative evaluation)

2. To assess whether it is good enough (summative evaluation)

3. To answer some quantitative question such as “what % of users are able to successfully complete this task”

4. To verify or refute some hypothesis such as “does eye gaze follow Fitts’ Law”

5. To perform a broad qualitative investigation of an area

6. To explore some domain or the use of a product in order to gain insight

It is common to see HCI researchers very confused about these distinctions, effectively performing formative or summative evaluation (1 or 2) in research papers where one of 3–6 is what is really needed.

I’ll look at each in turn, but first note that, to the extent there is empirical evidence for ‘five is enough’, it applies only to the first of these.

I dealt with this briefly in my paper “Human–Computer Interaction: a stable discipline, a nascent science, and the growth of the long tail” in the John Long Festschrift edition of IwC, and quote it here:

In the case of the figure of five users, this was developed based on a combination of a mathematical model and empirical results (Nielsen and Landauer 1993). The figure of five users is:

(i) about the optimal cost/benefit point within an iterative development cycle, considerably more users are required for summative evaluation or where there is only the opportunity for a single formative evaluation stage;

(ii) an average over a number of projects and needs to be assessed on a system by system basis; and

(iii) based on a number of assumptions, in particular, independence of faults, that are more reasonable for more fully developed systems than for early prototypes, where one fault may mask another.

We’ll look at this in more detail below, but critically, the number ‘5’ is not a panacea, even for formative evaluation.

As important as the kind of question you are asking is the kind of users you are using. So much of modern psychology is effectively the psychology of first-year psychology undergraduates (in the 1950s it was male prisoners). Is this representative? Does this matter? I’ll return to this at the end, but first let’s look briefly at each kind of question.

Finally, there is perhaps the real question “will the reviewers of my work think five users is enough” — good publications vs. good science. The answer is that they will certainly be as influenced by the Myth of Five Users as you are, so do good science … but be prepared to need to educate your reviewers too!

formative evaluation – prototyping cycle

As noted, formative evaluation was the scope of Nielsen and Landauer’s early work in 1993, which was then cited by Nielsen in his Alertbox in 2000 and has now taken on mythic status in the field.

The 1993 paper assumed a context of iterative development with many iterations, and asked how many users should be used per iteration: that is, how many users you should test before fixing the problems they found, then performing another cycle of user testing, and another. In all the cases they considered, the total number of users involved would be far more than five; it was just the number used in each iteration that was lower.

In order to calculate the optimal number of subjects to use per iteration, they looked at:

(i) the cost of performing a user evaluation

(ii) the number of new faults found (additional users will see many of the same faults, so there are diminishing returns)

(iii) the cost of a redevelopment cycle

All of these differ depending on the kind of project, so Nielsen and Landauer looked at a range of projects of differing levels of complexity. By putting them together, and combining with simple probabilistic models of bug finding in software, you can calculate an optimal number of users per experiment.
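The diminishing returns in (ii) can be sketched with the curve Nielsen and Landauer used, in which n users find N(1 − (1 − λ)^n) of the N problems present, λ being the chance that a single user reveals any given problem. The cost side below is a deliberately simplified, hypothetical toy model (a fixed cost per test-and-redevelop round, a cost per user, and a fixed value per problem found), not the paper’s own cost formula, and every parameter value, including λ = 0.31, is an illustrative assumption.

```python
def problems_found(n_users: int, total_problems: float, lam: float) -> float:
    """Expected number of distinct problems found by n_users, assuming each
    user independently reveals each problem with probability lam."""
    return total_problems * (1 - (1 - lam) ** n_users)


def best_users_per_round(total_problems: float, lam: float, round_cost: float,
                         cost_per_user: float, value_per_problem: float,
                         max_users: int = 50) -> int:
    """Hypothetical toy model: choose the number of users in one test round
    that maximises (value of problems found) / (cost of the round).
    round_cost is the fixed cost of a round, including redevelopment;
    cost_per_user must be positive so the cost is never zero."""
    def benefit_cost_ratio(n: int) -> float:
        benefit = value_per_problem * problems_found(n, total_problems, lam)
        cost = round_cost + cost_per_user * n
        return benefit / cost

    return max(range(1, max_users + 1), key=benefit_cost_ratio)


# Illustrative values only: 100 problems, lam = 0.31, 1 unit of cost per user.
print(f"5 users find about {problems_found(5, 100, 0.31):.0f} of 100 problems")
for round_cost in (0, 1, 20):
    best = best_users_per_round(100, 0.31, round_cost, 1, 1)
    print(f"fixed cost per round {round_cost}: best {best} users per round")
```

In this toy model, when a round costs nothing beyond the users themselves the best choice is a single user, and the optimum grows as the fixed cost per round grows, which is the same intuition that makes cheap redevelopment favour very small test rounds.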

They found that, depending on the project, the statistics and costs varied, and hence so did the optimal number of users/evaluators (between 7 and 21), with, on the whole, more complex projects (with more different kinds of bugs and more costly redevelopment cycles) having a higher optimum than simpler ones. In fact all the numbers are larger than five, but five was the number in Nielsen’s earlier discount engineering paper, so the paper did some additional calculations that yielded a different kind of (lower) optimum (3.2 users: pity the last 0.2 user), with five somewhere between 3 and 7 … and a myth was born!

Today, with Web 2.0 and ‘perpetual beta’, agile methods and continuous deployment reduce redevelopment costs to near zero, so Twidale and Marty argue for ‘extreme evaluation’, where often one user may be enough (see also my IwC paper).

The number also varies through the development process. Early on, one user (indeed, using it yourself) will find many, many faults that need to be fixed. Later, faults become more obscure, or maybe only surface after long-term use.

Of course, if you use some sort of expert or heuristic evaluation, then the answer may be no real users at all!

And anyway, all of this is about ‘fault finding’. Usability is not about bug fixing but about making things better, and it is not at all clear how useful, if at all, the literature on bug fixing is for creating positive experiences.

summative evaluation – is it good enough to release

If you are faced with a product and want to ask “is it good enough?” (which can mean, “are there any usability ‘faults’?”, or, “do people want to use it?”), then five users is almost certainly not enough. To give yourself any confidence of coverage of the kinds of users and kinds of use situations, you may need tens or hundreds of users, very like hypothesis testing (below).

However, the answer here may also be zero users. If the end product is the result of a continuous evaluation process with one, five or some other number of users per iteration, then the number of users who have seen the product during this process may be sufficient, especially if you are effectively iterating towards a steady state where few or no new faults are found per iteration.

In fact, even when there has been a continuous process, the need for long-term evaluation becomes more critical as the development progresses, and maybe the distinction between summative and late-stage formative is moot.

But in the end there is only one user you need to satisfy — the CEO … ask Apple.

quantitative questions and hypothesis testing

(Note, there are real numbers here, but if you are a numerophobe never fear, the next part will go back to qualitative issues, so bear with it!)

Most researchers know that “five is enough” does not apply in experimental or quantitative studies … but that doesn’t always stop them quoting numbers back!

Happily in dealing with more quantitative questions or precise yes/no ones, we can look to some fairly solid statistical rules for the appropriate number of users for assessing different kinds of effects (but do note “the right kind of users” below). And yes, very, very occasionally five may be enough!

Let’s imagine that our hypothesis is that a behaviour will occur in 50% of users doing an experiment. With five users, the probability that we will not see this behaviour in any of them is 1 in 32 (0.5^5), which is approximately 3%. That is, if we do not observe the behaviour at all, then we have a statistically significant result at the 5% level (p < 0.05) and can reject the hypothesis.

Note that there is a crucial difference between a phenomenon that we expect to see in about 50% of trials (i.e. the same user will do it about 50% of the time) and one where we expect 50% of people to do it all of the time. The former we can deal with using a small number of users and maybe longer or repeated experiments; the latter needs more users.

If instead we wanted to show that a behaviour happens less than 25% of the time, then we would need at least 11 users; for 10%, 29 users. On the other hand, if we hypothesised that a behaviour happens 90% of the time and didn’t see it in just two users, we could reject the hypothesis at the 1% significance level. In the extreme, if our hypothesis is that something never happens and we see it with just one user, or if the hypothesis is that it always happens and we fail to see it with one user, in both cases we can reject our hypothesis.
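The figures above (5, 11 and 29 users, and two users at the 1% level) come from asking for the smallest n for which seeing the behaviour in none of n users is unlikely enough, i.e. (1 − p)^n ≤ α. A minimal sketch, assuming users behave independently and are sampled at random; exact fractions are used so that floating-point rounding cannot tip a result that sits exactly on the threshold (as 0.1 × 0.1 does against 1%).

```python
from fractions import Fraction


def chance_none_show_it(p: Fraction, n: int) -> Fraction:
    """Probability that none of n independent users shows a behaviour
    that each user shows with probability p."""
    return (1 - p) ** n


def min_users_to_reject(p: Fraction, alpha: Fraction) -> int:
    """Smallest n such that observing the behaviour in none of n users
    rejects 'it occurs with probability p' at significance level alpha."""
    if not (0 < p <= 1 and 0 < alpha < 1):
        raise ValueError("need 0 < p <= 1 and 0 < alpha < 1")
    n = 1
    while chance_none_show_it(p, n) > alpha:
        n += 1
    return n


cases = [
    (Fraction(1, 2), Fraction(5, 100)),   # behaviour in 50% of users, 5% level
    (Fraction(1, 4), Fraction(5, 100)),   # 25% of users, 5% level
    (Fraction(1, 10), Fraction(5, 100)),  # 10% of users, 5% level
    (Fraction(9, 10), Fraction(1, 100)),  # 90% of users, 1% level
]
for p, alpha in cases:
    print(f"p = {float(p):.0%}, alpha = {float(alpha):.0%}: "
          f"{min_users_to_reject(p, alpha)} users")
```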

The above only pertains when you see samples where either none or all of the users do something. More often we are trying to assess some number. Rather than “does this behaviour occur 50% of the time”, we are asking “how often does this behaviour occur”.

Imagine we have 100 users (a lot more than five!), and notice that 60% do one thing and 40% do the opposite. Can we conclude that in general the first thing is more prevalent? The answer is yes, but only just. Where something is a simple yes/no or either/or choice and we have counted the replies, we have a binomial distribution. If we have n (100) users and the probability of them answering ‘yes’ is p (50% if there is no real preference), then the maths says that the average number of times we expect to see a ‘yes’ response is n x p = 100 x 0.5 = 50 people (fairly obvious). It also says that the standard deviation of this count is sqrt(n x p x (1 - p)) = sqrt(100 x 0.5 x 0.5) = sqrt(25) = 5. As a rule of thumb, if answers differ by more than 2 standard deviations from the expected value, then this is statistically significant; so 60 ‘yes’ answers vs. the expected 50 is significant at 5%, but 55 would have just been ‘in the noise’.
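The two-standard-deviation rule of thumb can be written out directly. This is a sketch of that rule only, using the binomial mean n × p and standard deviation sqrt(n × p × (1 − p)); at the borderline, an exact binomial test can give a slightly different verdict.

```python
import math


def binomial_z(yes: int, n: int, p: float = 0.5) -> float:
    """How many standard deviations the observed 'yes' count lies from the
    expected count n * p, using the binomial mean and standard deviation."""
    mean = n * p
    sd = math.sqrt(n * p * (1 - p))
    return (yes - mean) / sd


for yes in (55, 60):
    z = binomial_z(yes, 100)
    verdict = "at or beyond 2 SD" if abs(z) >= 2 else "in the noise"
    print(f"{yes}/100 'yes': z = {z:.1f} ({verdict})")
```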

Now drop this down to 10 users and imagine you have 7 ‘yes’s and 3 ‘no’s. For these users, in this experiment, they answer ‘yes’ more than twice as often as ‘no’, but here this difference is still no more than we might expect by chance. You need at least 8 to 2 before you can say anything more. For five users even 4 to 1 is quite normal (try tossing five coins and see how many come up heads); only if all or none do something can you start to think you are onto something!
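For small numbers of users it is easy to work out the exact chance of a lopsided result. The sketch below assumes each user is an independent 50:50 coin toss (no real preference) and computes the one-sided probability of k or more ‘yes’ answers out of n; it needs Python 3.8 or later for `math.comb`.

```python
from fractions import Fraction
from math import comb


def chance_at_least(k: int, n: int) -> Fraction:
    """Exact probability of k or more 'yes' answers out of n when each
    answer is an independent 50:50 coin toss (no real preference)."""
    return Fraction(sum(comb(n, i) for i in range(k, n + 1)), 2 ** n)


for k, n in [(7, 10), (8, 10), (4, 5), (5, 5)]:
    p = chance_at_least(k, n)
    print(f"{k} or more 'yes' out of {n}: {p} = {float(p):.3f}")
```

A 7 to 3 split in favour of ‘yes’ turns up by pure chance about one time in six, and 4 to 1 among five users nearly one time in five.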

For more complex kinds of questions such as “how fast”, rather than “how often”, the statistics become a little more complex, and typically more users are needed to gain any level of confidence.

As a rule of thumb, some psychologists talk of 20 users per condition, so if you are comparing 4 things then you need 80 users. However, this is just a rule of thumb: some phenomena have very high variability (e.g. problem solving), whereas others (such as low-level motor actions) are more repeatable for an individual and have more consistency between users. For phenomena with very high variability even 20 users per condition may be too few, although within-subjects designs may help if possible. Pilot experiments or previous experiments concerning the same phenomenon are important, but this is probably the time to consult a statistician who can assess the statistical ‘power’ of a suggested design (the likelihood that it will reveal the effect of interest).
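To get a feel for why 20 users per condition can be too few, here is a rough power calculation for comparing two independent groups, using the normal approximation n ≈ 2 × ((z_(1−α/2) + z_power) / d)^2 users per group, where d is the expected difference in means divided by the standard deviation (Cohen’s d). It is a sketch, not a substitute for that statistician: the effect sizes are assumptions you would take from pilot or previous studies, the approximation slightly underestimates what an exact t-test calculation gives, and within-subjects designs need a different formula.

```python
import math
from statistics import NormalDist


def users_per_group(effect_size: float, alpha: float = 0.05,
                    power: float = 0.8) -> int:
    """Approximate users needed per condition to detect a difference in
    means of effect_size standard deviations (Cohen's d) with a two-sided
    test, using the normal approximation rather than the t distribution."""
    std_normal = NormalDist()  # mean 0, standard deviation 1
    z_alpha = std_normal.inv_cdf(1 - alpha / 2)
    z_power = std_normal.inv_cdf(power)
    return math.ceil(2 * ((z_alpha + z_power) / effect_size) ** 2)


for d in (1.0, 0.8, 0.5, 0.2):
    print(f"d = {d}: about {users_per_group(d)} users per condition")
```

With the conventional 5% significance level and 80% power, a large effect (d = 0.8) needs about 25 users per condition and a medium one (d = 0.5) about 63, so the number climbs quickly as the effect gets smaller relative to the variability.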

qualitative study

Here none of the above applies and … so … well … hmm how do you decide how many users? Often people rely on ‘professional judgement’, which is a posh way of saying “finger in the air”.

In fact, some of the numerical arguments above do still apply (sorry, numerophobes). If as part of your qualitative study you are interested in a behaviour that you believe happens about half the time, then with five users you would be very unlucky not to observe it (3% of the time). Or, to put it another way, if you observe five users you will see around 97% of behaviours that at least half of all users have (with loads and loads of assumptions!).

If you are interested in rarer phenomena, then you need lots more users: for a behaviour that you only see in 1 in 10 users, you have only a 40% chance of observing it with 5 users and, perhaps more surprisingly, only a 65% chance with 10 users.
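The detection figures in the last two paragraphs all come from one formula: if each user independently shows a behaviour with probability p, the chance of seeing it at least once among n users is 1 − (1 − p)^n. A minimal sketch, carrying the same ‘loads and loads of assumptions’ (independent, randomly chosen users):

```python
def chance_to_observe(p: float, n: int) -> float:
    """Probability of seeing a behaviour at least once among n users, if
    each user independently exhibits it with probability p."""
    return 1 - (1 - p) ** n


for p in (0.5, 0.25, 0.1):
    row = ", ".join(f"{n} users: {chance_to_observe(p, n):.0%}"
                    for n in (1, 5, 10, 20))
    print(f"behaviour in {p:.0%} of users -> {row}")
```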

However, if you are interested in a particular phenomenon, then randomly choosing people is not the way to go anyway: you are obviously going to select people who you feel are most likely to exhibit it; the aim is not to assess its prevalence in the world, but to find a few and see what happens.

Crucially when you generalise from qualitative results you do it differently.

Now in fact you will see many qualitative papers that add caveats to say “our results only apply to the group studied …”. This may be necessary to satisfy certain reviewers, but is at best disingenuous – if you really believe the results of your qualitative work do not generalise at all, then why are you publishing it – telling me things that I cannot use?

In fact, we do generalise from qualitative work, with care, noting the particular limitations of the groups studied, but still assume that the results are of use beyond the five, ten or one hundred people that we observed. However, we do not generalise through statistics, or from the raw data, but through reasoning that certain phenomena, even if only observed once, are likely to be ones that will be seen again, even if differing in details. We always generalise from our heads, not from data.

Whether it is one, five or more, by its nature deep qualitative data will involve fewer users than more shallow methods such as large scale experiments or surveys. I often find that the value of this kind of deep interpretative data is enhanced by seeing it alongside large-scale shallow data. For example, if survey or log data reveals that 70% of users have a particular problem and you observe two users having the same problem, then it is not unreasonable to assume that the reasons for the problem are similar to those of the large sample — yes you can generalise from two!

Indeed one user may be sufficient (as often happens with medical case histories, or business case studies), but often it is about getting enough users so that interesting things turn up.

exploratory study

This looking for interesting things is often the purpose of research: finding a problem to tackle. Once we have found an interesting issue, we may address it in many ways: formal experiments, design solutions, qualitative studies; but none of these are possible without something interesting to look at.

In such situations, as we saw with qualitative studies in general, the sole criterion for “is N enough” is whether you have learnt something.

If you want to see all, or most, of the common phenomena, then you need lots of users. However, if you just want to find one interesting one, then you only need as many as it takes to get you there. Furthermore, whilst you often choose ‘representative’ or ‘typical’ users (what is a typical user!) for most kinds of study and evaluation, for exploratory analysis extreme users are often the most insightful; of course, you have to work out whether your user or users are so unusual that the things you observe are unique to them … but again real research comes from the head: you have to think about it and make an assessment.

In the IwC paper I discuss some of the issues of single person studies in more detail and Fariza Razak’s thesis is all about this.

the right kind of users

If you have five, fifty or five hundred users, but they are all psychology undergraduates, they are not going to tell you much about usage by elderly users, or by young unemployed people who have left school without qualifications.

Again, the results of research ultimately come from the head, not the data: you will never get a completely typical or representative sample of users; the crucial thing is to understand the nature of the users you are studying, and to assess whether the effects you see in them are relevant and interesting more widely. If you are measuring reaction times, then education may not be a significant factor, but Game Boy use may be.

Many years ago I was approached by a political science PhD student. He had survey data from over 200 people (not just five!), and wanted to know how to calculate error bars to go on his graphs. This was easily done and I explained the procedure (a more systematic version of the short account given earlier). However, I was more interested in the selection of those 200 people. They were Members of Parliament; he had sent the survey to every MP (all 650 of them) and got over 200 replies, a 30% return rate, which is excellent for any survey. However, this was a self-selected group and so I was more interested in whether the grounds for self-selection influenced the answers than in how many of them there were. It is often the case that those with strong views on a topic are more likely to answer surveys on it. The procedure he had used was as good as possible, but, in order to be able to make any sort of statement about the interpretation of the data, he needed to make a judgement. Yet again knowledge is ultimately from the head not the data.
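The procedure I gave him isn’t recorded here, but a common way to put error bars on a survey proportion is the normal-approximation interval p̂ ± 1.96 × sqrt(p̂(1 − p̂)/n), optionally shrunk by the finite population correction sqrt((N − n)/(N − 1)) when, as here, the replies are a sizeable fraction of a finite population. The sketch below uses hypothetical answers (120 of 200 agreeing with some statement). Note what it cannot do: both versions assume the respondents are a random sample, so neither says anything about self-selection, which is exactly the judgement the data alone cannot make.

```python
import math
from typing import Optional


def proportion_half_width(successes: int, n: int,
                          population: Optional[int] = None,
                          z: float = 1.96) -> float:
    """Half-width of an approximate 95% error bar for a proportion, using
    the normal approximation. If population is given, apply the finite
    population correction. Assumes a simple random sample."""
    p_hat = successes / n
    se = math.sqrt(p_hat * (1 - p_hat) / n)
    if population is not None:
        se *= math.sqrt((population - n) / (population - 1))
    return z * se


# Hypothetical answers: 120 of the 200 replies agree with some statement.
plain = proportion_half_width(120, 200)
corrected = proportion_half_width(120, 200, population=650)
print(f"60% +/- {plain:.1%} (ignoring population size)")
print(f"60% +/- {corrected:.1%} (treating all 650 MPs as the population)")
```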

For small numbers of users these choices are far more critical. Do you try and choose a number of similar people, so you can contrast them, or very different so that you get a spread? There is no right answer, but if you imagine having done the study and interpreting the results this can often help you to see the best choice for your circumstances.

being practical

In reality whether choosing how many, or who, to study, we are driven by availability. It is nice to imagine that we make objective selections based on some optimal criteria — but life is not like that. In reality, the number and kind of users we study is determined by the number and kind of users we can recruit. The key thing is to understand the implications of these ‘choices’ and use these in your interpretation.

As a reviewer I would prefer honesty here, to know how and why users were selected so that I can assess the impact of this on the results. But that is a counsel of perfection, and again good science and getting published are not the same thing! Happily there are lovely euphemisms such as ‘convenience sample’ (who I could find) and ‘snowball sample’ (friends of friends, and friends of friends of friends), which allow honesty without insulting reviewers’ academic sensibilities.

in the end

Is five users enough? It depends: one, five, fifty or one thousand (Google test live with millions!). Think about what you want out of the study: numbers, ideas, faults to fix, and the confidence and coverage of issues you are interested in, and let that determine the number.

And, if I’ve not said it enough already, in the end good research comes from your head: from thinking about and understanding the users and the questions you are posing, not from the fact that you had five users.

references

A. Dix (2010) Human-Computer Interaction: a stable discipline, a nascent science, and the growth of the long tail. Interacting with Computers, 22(1) pp. 13-27. http://www.hcibook.com/alan/papers/IwC-LongFsch-HCI-2010/

Nielsen, J. (1989). Usability engineering at a discount. In Salvendy, G., and Smith, M.J. (Eds.), Designing and Using Human–Computer Interfaces and Knowledge Based Systems, Elsevier Science Publishers, Amsterdam. 394-401.

Nielsen, J. and Landauer, T. K. (1993). A mathematical model of the finding of usability problems. In Proceedings of the INTERACT ’93 and CHI ’93 Conference on Human Factors in Computing Systems (Amsterdam, The Netherlands, April 24–29, 1993). CHI ’93. ACM, New York, NY, 206–213. http://doi.acm.org/10.1145/169059.169166

Jakob Nielsen’s Alertbox, March 19, 2000: Why You Only Need to Test With 5 Users. http://www.useit.com/alertbox/20000319.html

Fariza Razak (2008). Single Person Study: Methodological Issues. PhD Thesis. Computing Department, Lancaster University, UK. February 2008. http://www.hcibook.net/people/Fariza/

Michael Twidale and Paul Marty (2004-) Extreme Evaluation. http://people.lis.uiuc.edu/~twidale/research/xe/

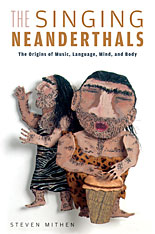

One of my birthday presents was Steven Mithen’s “The Singing Neanderthals” and, having been on holiday, I have already read it! I read Mithen’s “The Prehistory of the Mind” some years ago and have referred to it repeatedly over the years[1], so was excited to receive this book, and it has not disappointed. I like his broad approach, taking evidence from a variety of sources as well as his own discipline of prehistory; in times when everyone claims to be cross-disciplinary, Mithen truly is.
