Government research funding policy in many countries, including the UK, has focused on centres of excellence, putting more funding into a few institutions and research groups who are creating the most valuable outputs.

Is this the best policy, and does evidence support it?

I’m prompted to write as Leonel Morgado (Facebook, web) shared a link to a 2013 PLOS ONE paper, “Big Science vs. Little Science: How Scientific Impact Scales with Funding”, by Jean-Michel Fortin and David Currie. The paper analyses work funded by the Natural Sciences and Engineering Research Council of Canada (NSERC), and looks at size of grant vs. research outcomes. The paper demonstrates diminishing returns: large grants produce more research outcomes than smaller grants, but less per dollar spent. That is, concentrating research funding appears to reduce the overall research output.
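To see why diminishing returns imply that concentration lowers total output, here is a minimal sketch in Python. The power-law form and the exponent of 0.5 are purely my illustrative assumptions, not figures from the paper; any exponent below 1 gives the same qualitative picture.

```python
def impact(grant_size, exponent=0.5):
    """Hypothetical impact of a single grant: rises with size, but sub-linearly.

    The power-law shape and the default exponent are illustrative assumptions.
    """
    return grant_size ** exponent


def total_impact(budget, n_grants, exponent=0.5):
    """Total impact when a fixed budget is split equally among n_grants."""
    return n_grants * impact(budget / n_grants, exponent)


budget = 1_000_000  # an arbitrary fixed pot of money
for n in (1, 4, 16):
    print(n, "grants ->", round(total_impact(budget, n)))
```

Under these assumptions each individual large grant still out-produces each individual small one, yet the same pot spread over more grants yields more in total.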

Of course, those obtaining research grants have all been through a highly competitive process, so the NSERC results may simply reflect the fact that we are already looking at the very top level of research projects.

However, a report many years ago reinforces this story, and suggests it holds more broadly.

Sometime in the mid-to-late 1990s HEFCE, the UK higher education funding agency, did a study where they ranked all universities against very simple research output metrics [1]. One of the outputs was the number of PhD completions and another was industrial research income (arguable whether this is an output!), but I forget the third.

Not surprisingly Oxford and Cambridge came top of the list when ranked by aggregate research output.

However, the spreadsheet also included the amount of research money HEFCE paid into the university and a value-for-money column.

When ranked against value-for-money, the table was nearly reversed, with Oxford and Cambridge at the very bottom and Northampton University (not typically known as the peak of the university excellence ratings) at the top. That is, HEFCE got more research output per pound spent at Northampton than anywhere else in the UK.
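The reversal is easy to reproduce with a toy table. The sketch below uses entirely made-up figures for three placeholder institutions (I do not have the original spreadsheet), and simply ranks them first by raw output and then by output per pound of funding.

```python
# Entirely hypothetical figures for placeholder institutions:
# "output" is some aggregate output score, "funding_m" is funding in £ millions.
universities = {
    "University A": {"output": 900, "funding_m": 100.0},
    "University B": {"output": 300, "funding_m": 20.0},
    "University C": {"output": 60, "funding_m": 2.0},
}


def rank_by(key_fn):
    """Return institution names sorted from highest to lowest by key_fn."""
    return sorted(universities, key=lambda name: key_fn(universities[name]), reverse=True)


by_output = rank_by(lambda u: u["output"])
by_value = rank_by(lambda u: u["output"] / u["funding_m"])

print("By aggregate output: ", by_output)
print("By value for money:  ", by_value)
```

With these invented numbers the biggest producer is also the most expensive per unit of output, so the second ranking is exactly the first one reversed.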

The UK REF2014 used an extensive and time-consuming peer-review mechanism to rank the research quality of each discipline in each UK university-level institution, on a 1* to 4* scale (4* being best). Funding is heavily ramped towards 4* (in England the weighting is 10:3:0:0 for 4*:3*:2*:1*). As part of the process, comprehensive funding information was produced for each unit of assessment (typically a department), including UK government income, European projects, charity and industrial funding.
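To give a feel for how steep that ramp is, here is a small sketch applying the 10:3:0:0 weighting to two invented quality profiles. The departments and their percentages are hypothetical, and I ignore staff numbers and any other factors in the real allocation.

```python
# Weighting per star level as described above (4*:3*:2*:1* = 10:3:0:0).
WEIGHTS = {"4*": 10, "3*": 3, "2*": 0, "1*": 0}


def funding_score(profile):
    """Weighted score for a quality profile given as percentages per star level."""
    return sum(WEIGHTS[grade] * percent for grade, percent in profile.items())


# Hypothetical profiles: percentage of outputs judged at each level.
dept_x = {"4*": 40, "3*": 40, "2*": 15, "1*": 5}
dept_y = {"4*": 20, "3*": 50, "2*": 25, "1*": 5}

print("Department X:", funding_score(dept_x))
print("Department Y:", funding_score(dept_y))
```

In this toy case both departments have 5% of work at 1*, and 2* work counts for nothing, so the whole difference in score comes from the balance between 4* and 3*.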

So, we have an officially accepted assessment of research outcomes (that is, government funds against it!), and also of the income that generated it.

At a public meeting following the 2014 exercise, I asked a senior person at HEFCE whether they planned to take the two and create a value-for-money metric, for example, the cost per 4* output.

There was a distinct lack of enthusiasm for the idea!

Furthermore, my analysis of REF measures vs citation metrics suggested that this very focused official funding model was further concentrated by an almost unbelievably extreme bias towards elite institutions in the grading: apparently equal work in terms of external metrics was ranked nearly an order of magnitude higher for ‘better’ institutions, leading to funding being around 2.5 times higher for some elite universities than objective measures would suggest.

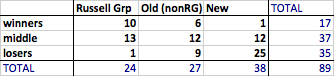

From “REF Redux 4 – institutional effects”: ‘winners’ are those with 25% or more above what metrics would estimate, ‘losers’ those with 25% or more below.

In summary, both Fortin and Currie’s PLOS ONE paper and the 1990s HEFCE report suggest that spreading funding more widely would increase overall research outcomes, but both official policy and implicit review bias do the opposite.

1. I recall reading this, but it was before the days when I rolled everything over on my computer, so I can’t find the exact reference. If anyone recalls the name of the report, or has a copy, I would be very grateful.